
8/13/2023 Nn sequential cnn

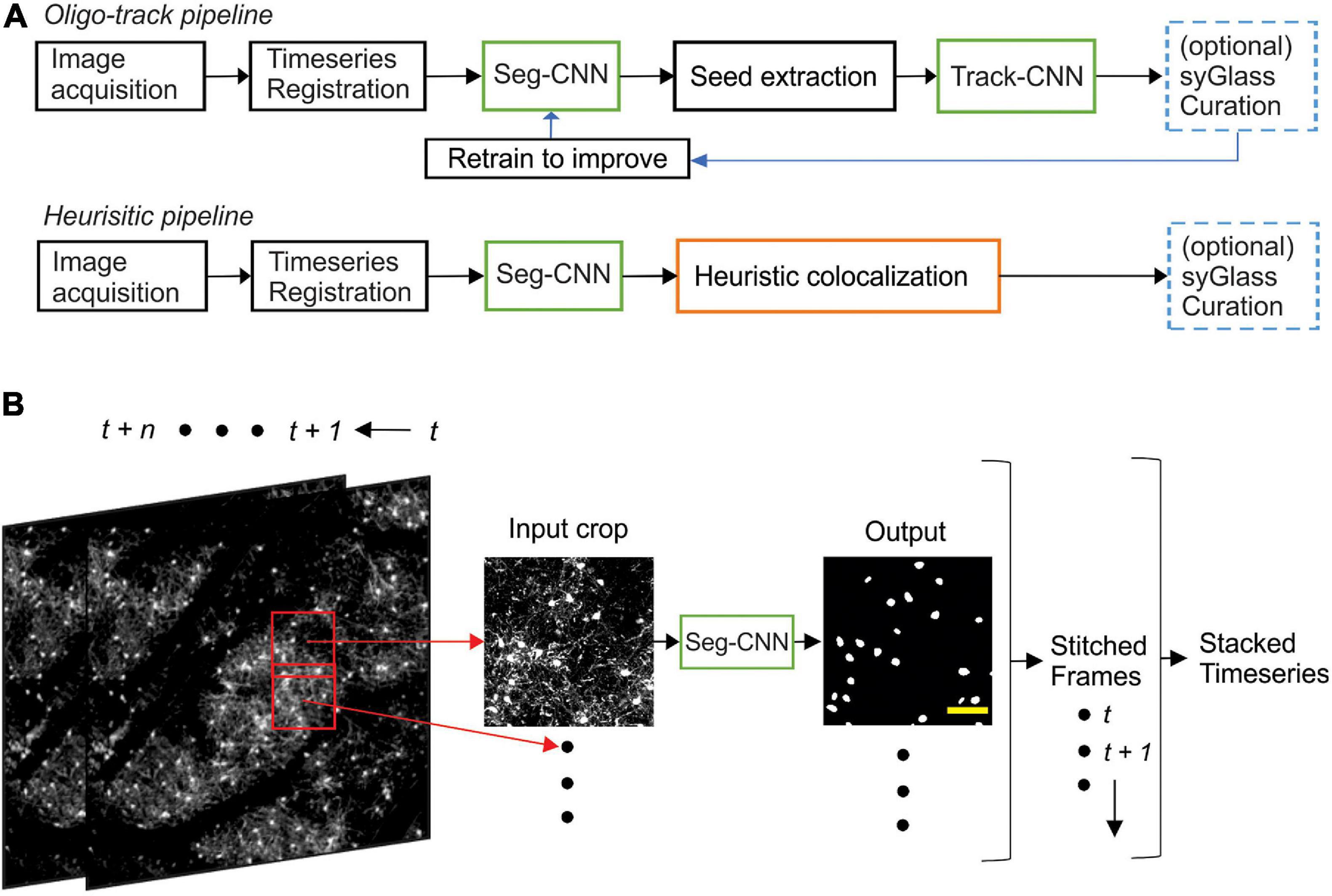

Keras, Regression, and CNNs

Update: This blog post is now TensorFlow 2+ compatible!

In the first part of this tutorial, we’ll discuss our house prices dataset, which consists of not only numerical/categorical data but also image data. From there we’ll briefly review our project structure. We’ll then create two Python helper functions:

- The first one will be used to load our house price images from disk.
- The second one will be used to construct our Keras CNN architecture.

Finally, we’ll implement our training script and then train a Keras CNN for regression prediction.

I want to design a double CNN like in the image to process RGB images AND depth images. To do that, I have one CNN to extract features from the RGB image and one CNN for the depth features, and I join both sets of features to be processed by a fully connected network.

Here’s an extract of a first version with 2 classes, CNN and Double_CNN:

    from torchvision.models import ResNet18_Weights
    backbone = model_func(weights=ResNet18_Weights.DEFAULT)
    # ...
    self.feature_extractor = torch.nn.Sequential(*_layers)
    _fc_layers = [torch.nn.Linear(feature_size, 256),
                  ...]
    self.fc = torch.nn.Sequential(*_fc_layers)

And for Double_CNN:

    self.feature_extractor_rgb = self._build_features_layers(model_func)
    self.feature_extractor_depth = self._build_features_layers(model_func)
    _fc_layers = [torch.nn.Linear(2*feature_size, 256),
                  ...]

    def _build_features_layers(self, model_func):
        """Return the frozen layers of the pretrained CNN specified by the model_func parameter."""
        # Load pre-trained network: choose the model for the pretrained network
        # ...

    # ...
    features_rgb = rgb.squeeze(-1).squeeze(-1)
    depth = self.feature_extractor_depth(depth)
    features_depth = depth.squeeze(-1).squeeze(-1)
    features = torch.cat((features_rgb, features_depth), dim=1)

When I run the first program, I get this for the model:

    CNN(
      ...
      (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      ...
      (2): Linear(in_features=32, out_features=2, bias=True)

So, one ‘feature_extractor’ and one ‘fc’ sequential layer. For the second program, I get one ‘feature_extractor_rgb’, one ‘feature_extractor_depth’ and one ‘fc’ sequential layer.

Now, I want to factor out the common code from the 2 classes, so I decided to create one CNN base class and 2 derived classes: Simple_CNN and Double_CNN. Here’s the code for them:

    def build_model(self, feature_extractor_names):
        for feature_extractor_name in feature_extractor_names:
            self.feature_extractors.append(self._build_features_layers(model_func))

    # build the model with one CNN feature extractor using RGB images
    # build the model with two CNN feature extractors using RGB images and depth images
    rgb_feature_extractor = self.feature_extractors
    depth_feature_extractor = self.feature_extractors

When I run ‘Simple_CNN. […]
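The base-class refactoring described above could be sketched roughly as follows. This is only an illustration under stated assumptions, not the author's actual code: `make_backbone`, `BaseCNN`, `SimpleCNN` and `DoubleCNN` are hypothetical names, a tiny convolutional stack stands in for the frozen pretrained ResNet18 so the example runs offline, the depth images are assumed to be 3-channel tensors, and the hidden layers of `fc` are guesses. One point worth noting when collecting several feature extractors in a container: `torch.nn.Module` only registers submodules held in `torch.nn.ModuleList` (or assigned as attributes), not those appended to a plain Python list, so the sketch uses `ModuleList`.

```python
import torch
from torch import nn


def make_backbone(feature_size: int = 32) -> nn.Sequential:
    """Hypothetical stand-in for the frozen pretrained backbone."""
    backbone = nn.Sequential(
        nn.Conv2d(3, feature_size, kernel_size=3, padding=1),
        nn.BatchNorm2d(feature_size),
        nn.ReLU(),
        nn.AdaptiveAvgPool2d(1),  # output shape: (N, feature_size, 1, 1)
    )
    for param in backbone.parameters():
        param.requires_grad = False  # freeze the feature extractor
    return backbone


class BaseCNN(nn.Module):
    """Shared logic: N feature extractors followed by one fully connected head."""

    def __init__(self, n_extractors: int, feature_size: int = 32, n_outputs: int = 2):
        super().__init__()
        # ModuleList registers each extractor, so it appears in print(model),
        # model.parameters(), state_dict() and is moved by model.to(device).
        self.feature_extractors = nn.ModuleList(
            [make_backbone(feature_size) for _ in range(n_extractors)]
        )
        # Assumed head layout; only the first Linear width comes from the post.
        self.fc = nn.Sequential(
            nn.Linear(n_extractors * feature_size, 256),
            nn.ReLU(),
            nn.Linear(256, n_outputs),
        )

    def forward(self, *images: torch.Tensor) -> torch.Tensor:
        # One input image tensor per extractor, e.g. (rgb,) or (rgb, depth).
        features = [
            extractor(image).squeeze(-1).squeeze(-1)
            for extractor, image in zip(self.feature_extractors, images)
        ]
        return self.fc(torch.cat(features, dim=1))


class SimpleCNN(BaseCNN):
    """One feature extractor using RGB images."""

    def __init__(self, **kwargs):
        super().__init__(n_extractors=1, **kwargs)


class DoubleCNN(BaseCNN):
    """Two feature extractors using RGB images and depth images."""

    def __init__(self, **kwargs):
        super().__init__(n_extractors=2, **kwargs)


if __name__ == "__main__":
    rgb = torch.randn(4, 3, 16, 16)
    depth = torch.randn(4, 3, 16, 16)
    print(DoubleCNN())
    print(SimpleCNN()(rgb).shape, DoubleCNN()(rgb, depth).shape)
```

With this layout, the two derived classes differ only in how many extractors they request, and the width of the first `Linear` layer follows from that count (`feature_size` for one extractor, `2*feature_size` for two), matching the two `_fc_layers` definitions in the original extract.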
